Enterprise AI is undergoing a profound shift, from isolated use cases focused on productivity to a future defined by intelligent agents, verticalized platforms, and deeply embedded automation. The proliferation of large language models (LLMs) has catalyzed a new generation of enterprise software, moving beyond static chatbots and generic copilots toward AI agents that can reason, take action, and collaborate across complex workflows. As foundation models continue to improve in reasoning, cost-efficiency, and tool interoperability, we are approaching a clear inflection point in enterprise adoption. Furthermore, we are witnessing the beginnings of a new era of multi-modal foundation models, which are enabling a new generation of robotics.

This paper outlines four key trends that we are excited about within Enterprise AI: the rapidly evolving landscape of AI agents, the infrastructure breakthroughs enabling them, the emergence of verticalized “Operating System” software, and the impact of new multi-modal models on industrial robotics.

1. AI Agents are at an inflection point

The enterprise adoption of Large Language Models (LLMs) has evolved significantly, progressing through distinct phases. Initially, adoption centered on chatbot-like interfaces, providing human-like interactions to address user inquiries. Typical use cases included customer-facing support solutions (e.g., ServiceNow, Zendesk) and internal knowledge-management platforms (e.g., Glean, Guru). These solutions leverage Retrieval-Augmented Generation (RAG) to deliver contextually accurate information by retrieving relevant data.
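
The retrieve-then-generate flow behind RAG can be illustrated with a minimal sketch. It assumes a tiny in-memory knowledge base (the `DOCS` snippets are hypothetical) and uses simple bag-of-words cosine similarity in place of the learned embeddings and vector databases that production systems typically rely on. The final call to an LLM is left out; the sketch stops at the augmented prompt.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base snippets; a real deployment would index company documents.
DOCS = [
    "Password resets are handled in the account settings page under Security.",
    "Invoices are issued on the first business day of each month.",
    "Support is available by chat from 9am to 6pm on weekdays.",
]

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, docs, k=1):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]

def build_prompt(query, docs, k=1):
    """Assemble the augmented prompt that would be sent to an LLM."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

prompt = build_prompt("How do I reset my password?", DOCS)
print(prompt)
```

The design point is that the model only sees the retrieved context, which is what keeps answers grounded in enterprise data rather than in the model's general training.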

However, enterprises increasingly require action-oriented solutions rather than mere question-answer interactions. This has given rise to a significant inflection point characterized by agentic systems—AI agents capable of autonomously executing tasks with minimal or no human intervention, thus streamlining enterprise workflows and enhancing productivity.

1.1 AI Agent Landscape

The AI agent landscape can be grouped into four main categories. Together, these four layers define the evolving AI agent ecosystem, from horizontal copilots to deeply vertical solutions, supported by an emerging infrastructure and security stack.

General-Purpose AI Agents

General-purpose agents handle broad, cross-functional tasks and are typically developed by foundation model providers and big tech platforms. Examples include Microsoft Copilot and Google Gemini, which act as orchestration layers across their respective ecosystems (e.g., Azure/Microsoft 365 and Google Cloud/Workspace).

Foundation model companies such as OpenAI and Anthropic also provide tooling that enables developers to build custom agents on top of their LLMs.

Specialized AI Agents

Specialized agents focus on narrowly defined, high-value use cases such as:

- Coding: Cursor, GitHub Copilot

- Research & summarization: Google NotebookLM

- Enterprise knowledge & document intelligence: Glean

- Marketing & creative: Jasper AI, Canva

These agent-native applications deliver tailored workflows with strong user experience. Because many of these use cases are horizontal and require less industry-specific data, they present attractive entry points for startups.

Verticalized AI Agents

Vertical agents target complex, regulated industries where domain expertise and proprietary data create high barriers to entry. Key sectors include:

- Legal: Luminance, Harvey

- IP: RightHub

- Compliance: ComplyAdvantage

- Healthcare: Hippocratic AI, ScienceIO, Abridge

- Finance: SymphonyAI, AlphaSense

Incumbents or domain-native players (e.g., Raft.ai, Luminance) often have an advantage due to data access and regulatory know-how. Vertical agents can materially improve efficiency, accuracy, and compliance within their sectors.

Infrastructure Enablers

Infrastructure players provide the tooling required to build, deploy, and secure AI agents:

- No-code / low-code platforms: Druid.ai, Zapier, Coze, and Dify lower technical barriers and accelerate enterprise adoption. Compared to traditional RPA, these platforms offer greater adaptability and intelligence.

- Data curation & vector infrastructure: LlamaIndex, Pinecone, and Weaviate structure and manage data for AI-agent consumption.

- Tool integration: Browserbase enables browser-based automation and real-time web interaction, while Wildcard simplifies API integrations—expanding agent capabilities beyond internal data.

- Monitoring & security: As enterprise adoption grows, security and governance are critical. The OWASP Top 10 for LLM Applications highlights risks such as prompt injection, data leakage, supply chain vulnerabilities, excessive agency, and model theft.

Continuous monitoring and evaluation are essential to detect vulnerabilities and performance degradation early. Companies such as Langfuse and Haize Labs provide real-time monitoring and evaluation tooling.

Additional security-focused players include:

- Lakera AI – real-time AI security

- Lasso Security – LLM interaction protection

- Calypso AI – enterprise AI security suite

- Prompt Security – prompt injection defense

- Cranium AI – AI security infrastructure

1.2 Key Drivers of the Inflection Point

We believe the enterprise adoption of AI agents is reaching an inflection point, due to three converging trends:

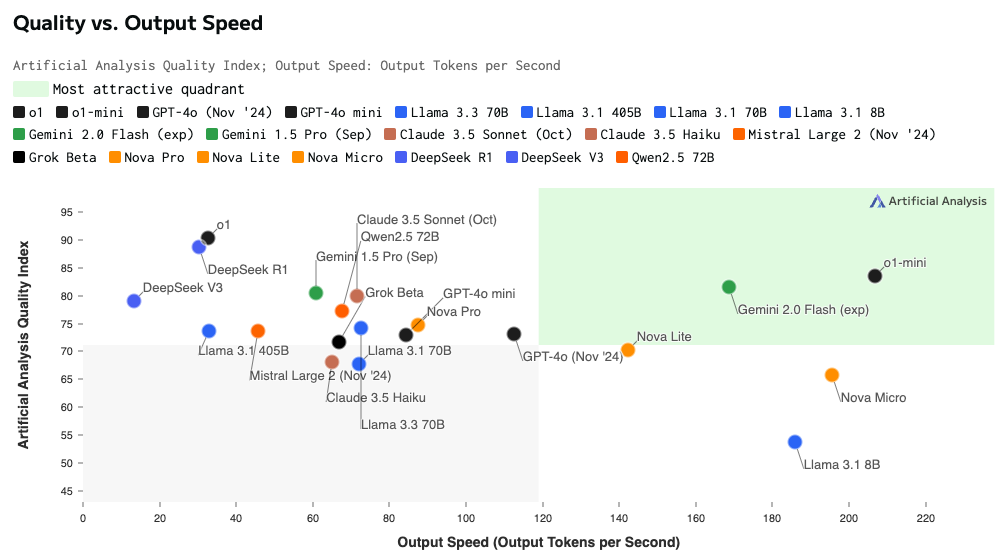

Slower but Stronger Models: The Emergence of Reasoning Models

Since ChatGPT’s launch in 2022, LLMs have scaled rapidly in data and context length. Instead of simply increasing size, newer models such as OpenAI’s o1 family focus on reasoning before responding, building Chain-of-Thought-style processing into the model itself. These models take longer to answer but perform significantly better on complex, multi-step problem-solving and planning tasks.

While early reasoning models were slower and more expensive, recent advances—across both proprietary and open ecosystems—are rapidly improving cost and speed. This makes reasoning-capable systems far more practical for real-world agentic workflows.

As a result, AI agents are becoming more reliable and production-ready: they can break down tasks, select tools dynamically, and execute structured workflows rather than generating surface-level outputs. Early vertical applications (e.g., in legal and compliance automation) already demonstrate how reasoning models unlock deeper workflow automation.

Source: https://artificialanalysis.ai/models

Open-source models catching up

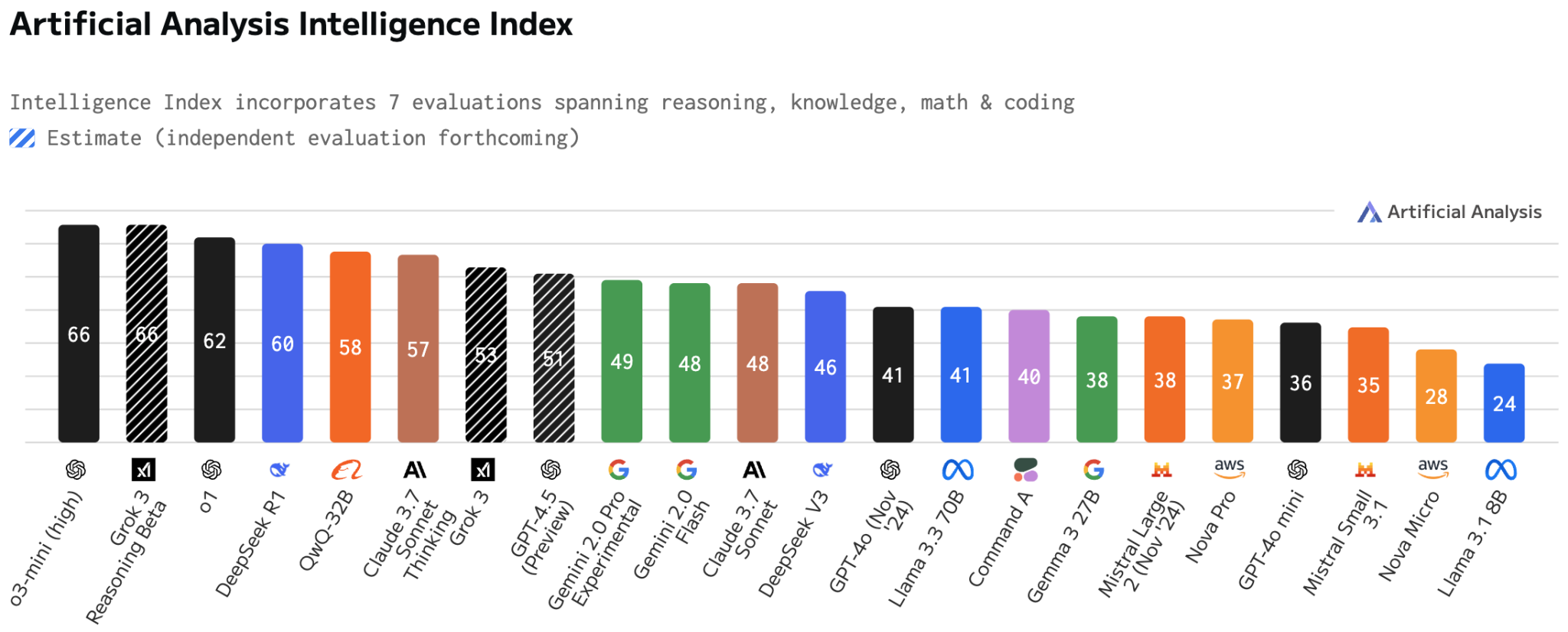

Closed-source leaders such as OpenAI and Anthropic initially dominated frontier performance. However, the open-source ecosystem has advanced at extraordinary speed.

Source: https://artificialanalysis.ai/models

As shown in the LLM leaderboard, open models are now approaching near-parity with proprietary systems like GPT-4o and Claude. Milestones such as Meta’s Llama 3.1 and DeepSeek R1 demonstrated that high-performance reasoning models can be delivered at significantly lower cost.

The competitive cycle is now measured in weeks, not years. Players such as Mistral, xAI, Alibaba (Qwen), and others continue to push performance while improving inference speed and efficiency. Key developments include:

- Alibaba’s Qwen/QwQ-32B (March 3, 2025): Matches DeepSeek R1 performance with even faster inference.

- Grok-3 by xAI (February 27, 2025): Briefly claimed the top leaderboard spot.

- OpenAI o3-mini: Quickly reclaimed the lead, showing how short innovation cycles have become.

For enterprises – and especially startups building vertical agents – this shift is critical:

- Lower model costs improve unit economics

- On-premise and self-hosted deployment becomes feasible

- Fine-tuning for domain-specific workflows becomes more practical

- Data sovereignty requirements in regulated industries can be met

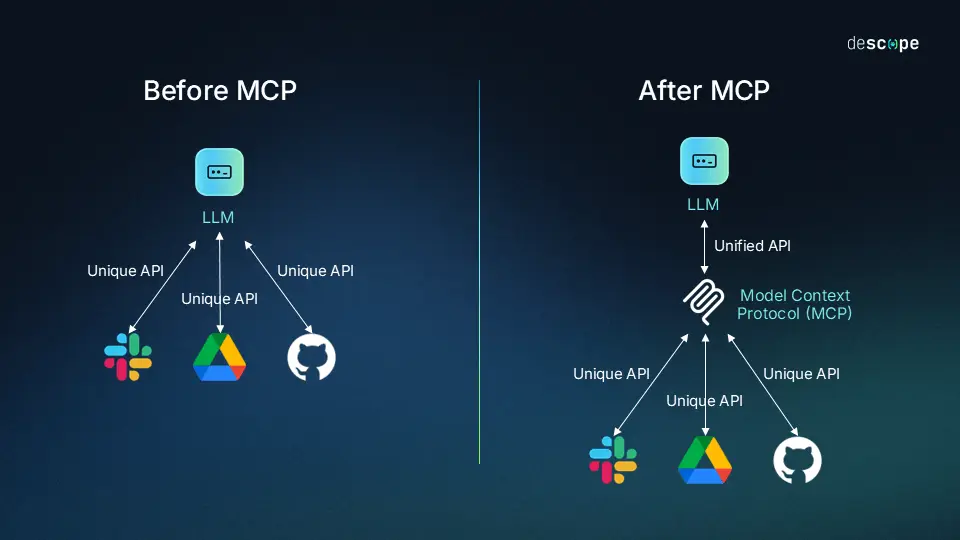

USB port for AI agents: MCP (Model Context Protocol)

LLMs act as the brain of an agent system—but to execute real-world actions, they must connect to external tools, APIs, and live data sources.

Today, integrations are handled through fragmented APIs, each with unique schemas and authentication logic. This creates scalability and reliability challenges for agentic systems.

Anthropic’s Model Context Protocol (MCP) introduces a standardized middleware layer between models and tools. Instead of bespoke integrations, applications expose capabilities (e.g., “book meeting,” “get weather”) through an MCP server, which handles routing and formatting. Agents interact through a unified interface.

MCP effectively functions as a USB port for AI agents:

- Plug-and-play tool integration

- Standardized security and access control

- Decoupling between models and tools

- Easier model switching

- Foundation for multi-agent ecosystems
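
The plug-and-play idea can be sketched with a toy tool server. This is not the official MCP SDK: the `ToolServer` class, the two tools, and their canned outputs are hypothetical, and only the JSON-RPC 2.0 message shape and the `tools/list` / `tools/call` method names are borrowed from the protocol. The point is that an agent discovers and invokes every tool through the same two requests, regardless of what sits behind them.

```python
import json

class ToolServer:
    """Toy stand-in for a tool server; an illustration, not the MCP SDK."""

    def __init__(self):
        self._tools = {}

    def register(self, name, description, func):
        """Expose a Python callable as a named tool."""
        self._tools[name] = {"description": description, "func": func}

    def handle(self, request_json):
        """Dispatch one JSON-RPC-style request and return a JSON response."""
        req = json.loads(request_json)
        params = req.get("params", {})
        if req["method"] == "tools/list":
            tools = [{"name": n, "description": t["description"]}
                     for n, t in self._tools.items()]
            return self._reply(req, result={"tools": tools})
        if req["method"] == "tools/call":
            tool = self._tools.get(params.get("name"))
            if tool is None:
                return self._reply(req, error={"code": -32602, "message": "unknown tool"})
            output = tool["func"](**params.get("arguments", {}))
            return self._reply(req, result={"content": [{"type": "text", "text": output}]})
        return self._reply(req, error={"code": -32601, "message": "method not found"})

    @staticmethod
    def _reply(req, **body):
        return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), **body})

# Hypothetical tools with canned outputs.
server = ToolServer()
server.register("get_weather", "Return a canned forecast for a city.",
                lambda city: f"Sunny in {city}")
server.register("book_meeting", "Pretend to book a meeting.",
                lambda title, time: f"Booked '{title}' at {time}")

def call_tool(name, arguments):
    """Client side: every tool is invoked through the same request shape."""
    request = json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/call",
                          "params": {"name": name, "arguments": arguments}})
    return json.loads(server.handle(request))

weather = call_tool("get_weather", {"city": "Zurich"})
print(weather["result"]["content"][0]["text"])
```

Because the client depends only on the request shape, swapping the model or adding a tool requires no change on the other side, which is the decoupling described above.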

For infrastructure builders, MCP-aligned orchestration layers or connectors could become foundational components of the AI stack—similar to how Twilio standardized communications APIs or Stripe standardized payments infrastructure.

Source: https://www.descope.com/learn/post/mcp?ref=jeffreybowdoin.com

These three forces – reasoning-optimized LLMs, rapid open-source progress, and standardized integration frameworks like MCP – are converging to push AI agents beyond experimentation and into enterprise production.

- Reasoning models provide deeper intelligence

- Open-source advances drive cost efficiency and flexibility

- MCP-like standards enable scalable, secure integration

Together, they enable agents that are smarter, more interoperable, and easier to deploy in complex enterprise environments.

We expect AI agents to transition from copilots and prototypes to core operational infrastructure—redefining how enterprises automate workflows and operationalize intelligence at scale.

2. The next phase of Agentic AI: Towards a Fully “Agentified” Enterprise

2.1 What’s Next in Agentic AI?

The evolution of AI agents is increasingly shaped by four core design patterns:

- Planning – Breaking complex tasks into structured, multi-step workflows using reasoning.

- Reflection – Evaluating and refining outputs based on prior results to improve reliability.

- Tool Use – Extending capabilities through APIs and external systems.

- Multi-Agent Collaboration – Coordinating multiple agents toward a shared objective.
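
As a rough illustration of how planning and tool use fit together, the sketch below separates a planner, which produces an ordered list of steps, from an executor, which runs each step against a tool. Everything here is hypothetical: the `plan` function is a hard-coded stub standing in for a reasoning model, and the two tools return canned data.

```python
def search_orders(customer):
    """Hypothetical tool: look up open orders for a customer."""
    return {"customer": customer, "open_orders": 2}

def draft_email(customer, open_orders):
    """Hypothetical tool: turn structured data into a message."""
    return f"Hi {customer}, you have {open_orders} open orders."

TOOLS = {"search_orders": search_orders, "draft_email": draft_email}

def plan(goal):
    """Stub planner: a reasoning model would generate this step list."""
    return [
        {"tool": "search_orders", "args": {"customer": goal["customer"]}},
        {"tool": "draft_email", "args_from_previous": True},
    ]

def execute(steps):
    """Run each step in order, feeding a step's output to the next when asked."""
    result = None
    for step in steps:
        args = result if step.get("args_from_previous") else step["args"]
        result = TOOLS[step["tool"]](**args)
    return result

message = execute(plan({"customer": "Acme"}))
print(message)
```

Keeping the plan as plain data makes it inspectable before execution, which matters in enterprise settings where a human or a policy check may need to approve steps.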

Recent reasoning models (e.g., OpenAI o1, DeepSeek R1) have effectively embedded planning into the model layer. Tool use is becoming standardized via frameworks such as MCP.

Reflection, however, largely remains application-level logic—implemented manually by developers (e.g., Devin’s ability to test and iterate on code). The key constraint is latency: reflection increases compute time. As hardware improves and architectures evolve, we expect reflection to move into the model layer, increasing autonomy and reliability.
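
A minimal sketch of application-level reflection, under stated assumptions: `generate` is a stub that stands in for a model call (it returns a deliberately buggy draft first and a corrected one once it receives feedback), and `evaluate` is a small hypothetical test suite. The loop shows why reflection costs latency: every failed check triggers another full generation round.

```python
def generate(task, feedback):
    """Stub generator standing in for a model call."""
    if feedback is None:
        return lambda xs: sum(xs) / (len(xs) - 1)  # buggy first draft of a mean
    return lambda xs: sum(xs) / len(xs)            # corrected draft after feedback

def evaluate(candidate):
    """Run checks; return None on success or a feedback string on failure."""
    cases = [([2, 4, 6], 4.0), ([10], 10.0)]
    for xs, expected in cases:
        try:
            got = candidate(xs)
        except Exception as exc:
            return f"input {xs} raised {type(exc).__name__}"
        if got != expected:
            return f"input {xs} gave {got}, expected {expected}"
    return None

def reflect(task, max_rounds=3):
    """Generate, evaluate, and retry with feedback until checks pass."""
    feedback = None
    for round_no in range(1, max_rounds + 1):
        candidate = generate(task, feedback)
        feedback = evaluate(candidate)
        if feedback is None:
            return candidate, round_no
    raise RuntimeError("no passing candidate within the round budget")

mean, rounds_used = reflect("write a function that averages a list")
print(rounds_used, mean([1, 2, 3]))
```

The `max_rounds` budget is the practical lever: it caps the extra compute time that reflection adds per task.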

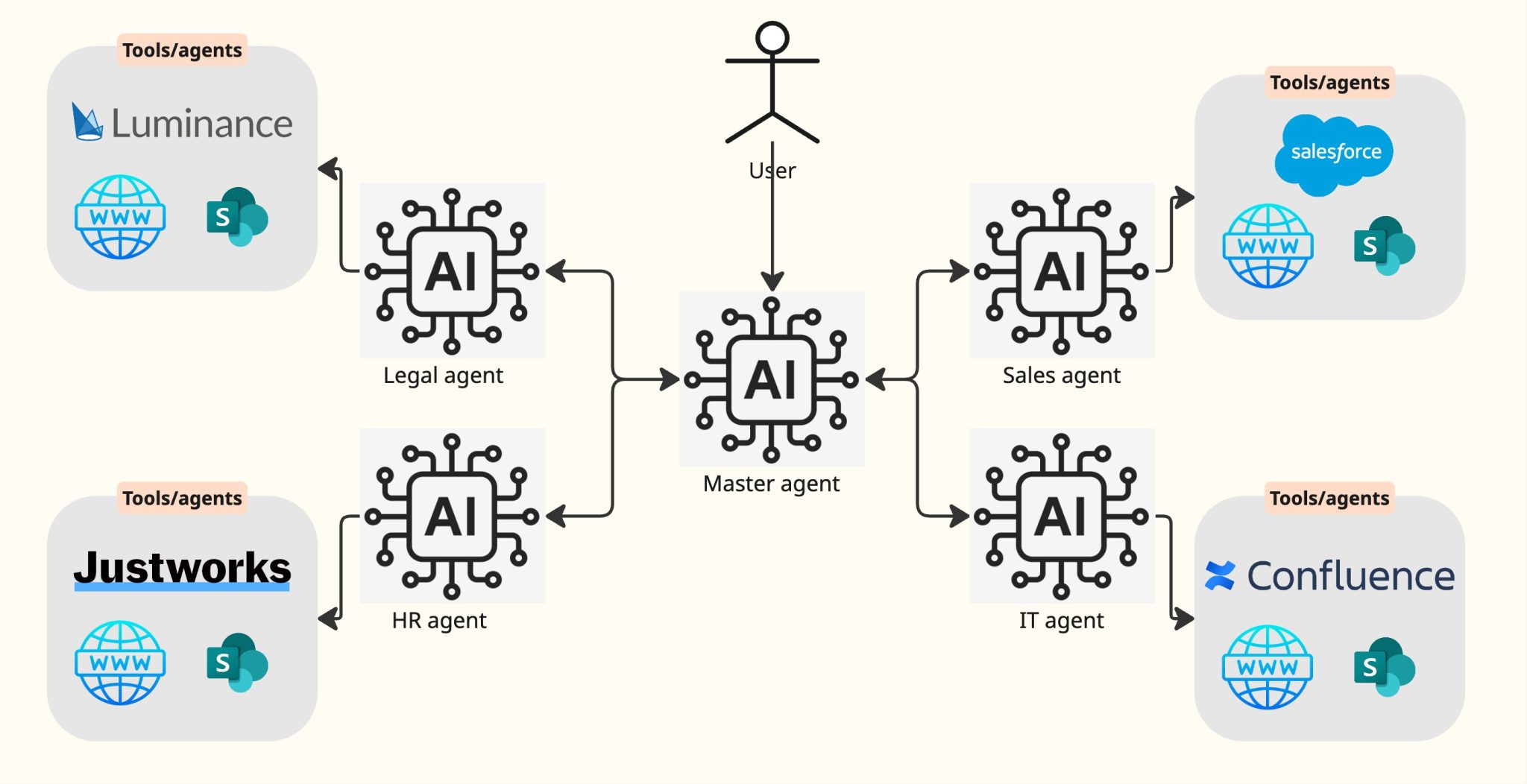

The next frontier is multi-agent collaboration. Today, most agents operate in silos, automating narrow tasks. In the future, agents will function more like digital teams—collaborating across systems. A developer agent, for example, could coordinate with testing, BI, and project management agents to autonomously ship and validate a feature.

This points toward AI-native operating systems, where software consists of interoperating agents rather than static applications. Early foundations are likely to be laid by platform players such as Microsoft (Copilot ecosystem) and Google (Gemini stack), which are building orchestration layers across enterprise workflows.

2.2 What If Everything Gets Agentified?

Looking ahead, AI agents are likely to become a foundational layer of enterprise software, reshaping departments and workflows over the next decade.

In this future, each enterprise could operate a master orchestrator agent—one that understands user intent, delegates tasks to specialized agents (HR, Finance, Sales, Legal, etc.), and synthesizes outputs into a coherent, human-centric response. Much like operating systems today coordinate multiple applications, tomorrow’s platforms may manage a dynamic ecosystem of interoperable agents, each responsible for a specific function but integrated within a unified framework.
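
The orchestrator pattern can be sketched as route, delegate, and synthesize. This is an illustrative toy under stated assumptions: the agent names, keyword sets, and canned replies are hypothetical, and keyword matching stands in for the intent understanding that a model would provide.

```python
import re

# Hypothetical specialized agents; each would wrap its own model and tools.
AGENTS = {
    "hr": {"keywords": {"vacation", "leave", "payroll"},
           "run": lambda request: "HR: request logged"},
    "finance": {"keywords": {"invoice", "budget", "expense"},
                "run": lambda request: "Finance: invoice queued for approval"},
    "legal": {"keywords": {"contract", "nda", "compliance"},
              "run": lambda request: "Legal: contract review started"},
}

def route(request):
    """Pick the agents whose keywords appear in the request."""
    words = set(re.findall(r"[a-z]+", request.lower()))
    return [name for name, agent in AGENTS.items() if words & agent["keywords"]]

def orchestrate(request):
    """Delegate to each matching agent and merge the outputs into one reply."""
    targets = route(request)
    if not targets:
        return "No specialized agent matched; escalating to a human."
    outputs = [AGENTS[name]["run"](request) for name in targets]
    return " | ".join(outputs)

print(orchestrate("Review the vendor contract and approve the invoice"))
```

A single request here fans out to two departments and comes back as one response, which is the coordination role the text compares to an operating system.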

The interface layer is still evolving. While early implementations are largely chat-based, the long-term direction points toward multimodal environments—blending voice, structured UI, search, and workflow orchestration into a seamless control layer for enterprise intelligence.

2.3 Implications

As this transformation unfolds, we see opportunities in two key categories:

- Agent-Native Applications: These are products built from day one around agentic workflows. While still early, this is where we expect long-term disruption. As infrastructure matures, agent-native startups can redesign entire verticals with fundamentally more intelligent and automated workflows.

- Agentification of Existing Software: In the near term, incumbent SaaS players will embed reasoning models, integrate external tools, and adopt agentic interfaces to remain competitive. This transition phase will likely drive the majority of short-term adoption and investment opportunities.

At Forestay, we continuously track how companies position themselves along this curve, from early experimentation with agents to deeper integration or full transformation. Winners will have a clear agentic roadmap, modular architectures, and interoperability with broader agent ecosystems.

3. Industry Operating Systems: The Emergence of Increasingly Verticalized AI

In the cloud era, vertical software leaders such as Procore ($11B), Toast ($21B), and ServiceTitan ($9B) followed a repeatable model:

- System of record – Centralized customer data

- Payments & billing – Embedded financial workflows

- Marketplace – Ecosystem consolidation

Agentic AI adds a new layer: a system of action, i.e., AI-driven automation of complex, labor-intensive workflows.

We are witnessing a shift from systems of record to systems of action. Defensibility increasingly comes from specialization and orchestration of AI agents—naturally favoring vertical operating systems.

Vertical specialization drives defensibility because:

- Niche industries prefer deeply integrated, purpose-built vendors

- Domain expertise and data create compounding advantages

- AI now makes “custom” solutions scalable

The opportunity extends beyond productivity gains. U.S. labor spend (~$11T) dwarfs the ~$450B enterprise software market—suggesting AI agents could unlock value far beyond traditional SaaS TAM.
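
To make the size gap concrete, the short calculation below uses the two figures quoted above. The capture shares (1% and 5%) are purely hypothetical scenarios chosen for illustration, not forecasts.

```python
LABOR_SPEND_USD = 11e12      # ~$11T U.S. labor spend, as quoted in the text
SOFTWARE_MARKET_USD = 450e9  # ~$450B enterprise software market, as quoted in the text

# How many times larger labor spend is than the software market.
ratio = LABOR_SPEND_USD / SOFTWARE_MARKET_USD

def value_at_capture(share):
    """Dollar value if AI agents addressed a given share of labor spend."""
    return LABOR_SPEND_USD * share

print(f"Labor spend is about {ratio:.0f}x the software market")
for share in (0.01, 0.05):  # hypothetical capture shares
    value = value_at_capture(share)
    print(f"{share:.0%} of labor spend = ${value / 1e9:,.0f}B "
          f"({value / SOFTWARE_MARKET_USD:.0%} of the software market)")
```

Under these assumptions, even a low single-digit share of labor spend is comparable in size to the entire existing software market, which is the intuition behind the claim.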

3.1 Implications

A key implication of this is that we are seeing increasing AI adoption in verticals that have historically been underserved or difficult to penetrate with software. AI is now unlocking entirely new use cases in several verticals, including (but not limited to):

- Legal: Legal tech has historically been a challenging market for software adoption, but this is set to change with the rapid spread of Gen AI across many legal workflows. We see significant potential, particularly for companies that have established a strong GTM motion and are building compounding data moats. Data security and trust also remain key drivers for AI adoption at scale in this industry.

- Forestay recently invested in Luminance, a leading player in Legal Contract Lifecycle Management (CLM). Luminance leverages AI to streamline the entire contract lifecycle — from drafting to negotiation and execution.

- Building Materials: The building materials industry remains a largely untapped market for software. To date, most software solutions have focused on project management (e.g., Procore), but there is no dominant platform optimizing pricing, inventory levels, and assortment for building materials and home improvement. Forestay recently invested in Datavations, which is addressing precisely this gap. By applying AI to solve unstructured data problems, Datavations is helping large building materials manufacturers in the U.S. capture significant annual contract values (ACVs). This represents a massive greenfield opportunity.

- Logistics: another industry that has traditionally relied on manual processes and email communications, making it ripe for AI-driven transformation. Raft AI is a strong example of this shift. By building the “freight forwarding OS,” Raft AI integrates AI across key operations, including document processing, payments, and invoicing. This automation is driving significant efficiency gains and positioning Raft AI as a category leader in logistics.

With AI rapidly spreading across enterprise workflows, we believe that winners will be those that successfully:

- Specialize in high-value, complex workflows

- Orchestrate AI agents to create a seamless user experience

- Build defensible moats through data and network effects

- Crack a scalable GTM motion

4. The Rapidly Evolving Landscape of AI-Enabled Industrial Robotics

4.1 Multi-modal models will enable the next generation of industrial robotics

In 1994, Steven Pinker observed that “the hard problems are easy and the easy problems are hard.” That paradox feels even more relevant in the post-LLM era. Summarizing vast amounts of text is now trivial for AI, yet simple physical tasks—like folding clothes—remain extremely difficult for robots.

Still, AI is reshaping robotics. The emergence of multimodal spatial models, particularly Vision-Language-Action Models (VLAMs), marks a major breakthrough. By combining visual perception, language understanding, and action, these systems allow robots to interpret their environment and act using multiple information channels simultaneously.

4.2 VLAMs

VLAMs enable zero-shot reasoning in physical environments—allowing robots to execute tasks they were not explicitly programmed for. This represents a fundamental shift from rigid, pre-programmed automation toward adaptive, instruction-driven systems.

In industrial settings, this could unlock substantial efficiency gains. Instead of deploying multiple task-specific robots, manufacturers could operate more general-purpose systems capable of adapting via natural language instructions and visual context—handling complex assembly, adjusting to production changes, and collaborating with human workers.

However, major constraints remain:

- Model progress outpaces tooling and applications

- Data scarcity: Unlike LLMs trained on internet-scale text, VLAMs require large, high-quality multimodal datasets capturing physical interactions—data that is far harder to obtain

- Hardware economics: Advanced sensors, actuators, and compute infrastructure require significant upfront investment, challenging unit economics—especially for smaller operators

We estimate VLAMs remain 4–5 years away from achieving LLM-level maturity.

4.3 Implications

These constraints create a strategic opportunity—particularly for Europe, where industrial manufacturing depth meets world-class AI research.

Progress will depend on close collaboration between hardware manufacturers and cutting-edge AI labs. Europe’s industrial base, combined with institutions such as École Polytechnique, SupAero, EPFL, and ETH, creates strong conditions for applied robotics innovation—especially in aerospace and automotive, where leaders like Airbus, Volkswagen, and Stellantis can drive early integration.

We expect public-private partnerships between industry and academia to accelerate as the ecosystem matures. For Forestay—where nearly 30% of investments originate from university spin-outs—this represents a meaningful long-term opportunity.

AI-enabled robotics remains early, but as multimodal models mature and tooling catches up, the shift from static automation to intelligent, adaptive manufacturing systems could redefine industrial productivity over the next decade.

5. Conclusions & Forestay Investment Thesis

Enterprise AI is at a clear inflection point. What began as isolated productivity tools is rapidly evolving into an ecosystem defined by intelligent agents, vertical operating systems, and the early foundations of multimodal robotics. Improvements in reasoning‑capable models, the acceleration of open‑source innovation, and emerging standards like MCP are making AI systems more reliable, more interoperable, and far easier to embed into real enterprise workflows. As a result, AI is shifting from answering questions to executing work.

This transition is reshaping the software landscape. In the near term, incumbents will “agentify” their products, embedding automation, tool use, and planning into existing systems. Over time, vertically specialized platforms built around proprietary data, domain expertise, and agentic orchestration will become the new operating systems for complex industries. Meanwhile, multimodal models are setting the stage for more adaptive industrial robotics, even if widespread deployment remains several years away.

Across all layers, the direction is consistent: enterprises are moving toward AI-native architectures where agents collaborate, reason, and act across workflows end‑to‑end. For investors, this creates meaningful opportunities both in emerging agent‑native applications and in vertical platforms where automation can unlock step‑function improvements in efficiency, accuracy, and labor productivity.

The winners will be those who combine strong domain grounding with agentic design, build for interoperability rather than closed systems, and leverage compounding data advantages. While many technical and operational challenges remain, the trajectory is unmistakable – the enterprise is becoming increasingly automated, increasingly agent-driven, and increasingly shaped by multimodal intelligence.

References and Resources:

- CB Insights, AI Agent Trends to Watch 2025

- https://aiagentsdirectory.com/landscape

- https://artificialanalysis.ai/models

- https://www.openapis.org/

- https://www.descope.com/learn/post/mcp?ref=jeffreybowdoin.com

- https://devin.ai/

- https://owasp.org/www-project-top-10-for-large-language-model-applications/

- https://openai.com/index/learning-to-reason-with-llms/

- https://zapier.com/blog/prompt-engineering/#techniques-examples

- https://github.com/protectai/rebuff#features

- https://www.bvp.com/atlas/state-of-the-cloud-2024#Trend-4-Vertical-AI-shows-potential-to-dwarf-legacy-SaaS-with-new-applications-and-business-models

- https://arxiv.org/abs/1901.08579