💡Moravec’s Paradox (1988)

It is comparatively easy to make computers exhibit adult level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility.

In recent years, we’ve begun to see the transformative potential of AI products across a wide range of industries due to the advancements of AI in language and vision. But one crucial modality has largely been left behind: physical action. For decades, progress in robotics has lagged behind other fields, constrained in part by Moravec’s Paradox (above) — the idea that high-level reasoning requires less computational effort than low-level sensorimotor skills.

Today, that dynamic is beginning to shift. Recent advances in AI are breathing new life into the field of robotics, challenging long-held assumptions and opening the door to meaningful progress in physical intelligence.

This paper explores the emerging opportunity in intelligent robotics, providing an overview of the current Robotics AI ecosystem, key challenges, and a recommendation for how Forestay should position itself within this evolving space.

1. The AI opportunity in Robotics

1.1 Why we are excited about the space

An enormous market opportunity that remains untapped

It’s no surprise that the economic potential of intelligent robotics is enormous. What did surprise us while writing this paper, however, is just how large that potential is — especially within industry and industrial manufacturing.

Take manufacturing automation as an example. Even at the most advanced facilities, automation tops out at around 40%, meaning that roughly 60% of the work is still done by humans. And that is the high end of automobile manufacturing, the most automated sub-sector of manufacturing. On average, factories operate at only about 20% automation. The vast majority of tasks therefore remain human-led, which points to a massive, untapped opportunity for automation through robotics.

So far, AI has made its biggest strides in information services, where it typically provides “nice-to-have” enhancements to human-in-the-loop tasks (e.g. AI agents for contract drafting, document review, etc). But it has been largely absent from the physical world — the domain of “must-have” functionality, where reliability, precision, and impact are critical. Unlocking substantial advances in AI-powered robotics would allow AI to move beyond the digital realm of bits into the physical world of atoms — opening up an entirely new frontier of market opportunity.

The next wave of AI will be rooted in physical models

At the Davos conference in January 2025, Yann LeCun, Chief AI Scientist at Meta, declared that this decade would be the “decade of robotics” and predicted the rise of “a new breed of AI capable of understanding the physical world.” It was an exciting endorsement from one of the field’s leading thinkers — but LeCun is far from alone. A growing number of top AI researchers now believe that the current LLM-centric paradigm will likely give way — within the next decade — to architectures based on “world models” that are grounded in a deep understanding of physical reality.

While the exact form of these future architectures remains speculative, we believe that robotics will play a central role in shaping them — and that this possibility deserves our attention, even if, as we will discuss later on, it may not be actionable for Forestay in the short term.

1.2 Is Robotics having its GPT moment?

The promise of horizontal robotics platforms

The current wave of excitement around intelligent robotics is driven by the promise of horizontal robotics: the emergence of a general-purpose robotics platform, analogous to what the personal computer and smartphone were for computing, that can serve a wide variety of tasks across industries.

Unlike today’s robots, which are often custom-built for narrow, repetitive tasks in structured environments, a horizontal platform would enable robots to perform diverse, unstructured, and dynamic tasks, adapting to different use cases with minimal redesign. This shift is being driven by advances in AI, especially in foundation models, which allow robots to better perceive, understand, and interact with the real world. The vision is that this foundational layer will unlock new applications, lower the barrier to entry for developers, and spark an ecosystem of hardware and software innovation, making robotics far more flexible and scalable.

💡

The central idea behind the rise of the current intelligent robotics wave is that robotics foundation models have the potential to deliver benefits similar to those seen in other domains of AI. More specifically, it suggests that the scaling laws observed in modalities like language and vision will also extend to robotic actions, enabling significant gains in capability as models grow in size and complexity.

While this is a bold and forward-looking vision of the future of robotics, venture capital funding in the field has already begun to validate it.

Funding & talent are flowing into intelligent robotics

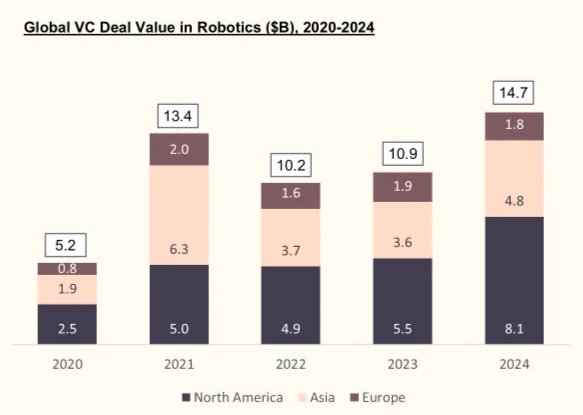

Source: Pitchbook

VC funding in robotics has gained considerable traction: a notable spike in 2021, a drop in 2022 as the VC market pulled back, a quick rebound in 2023, and the sector’s best year on record in 2024, with over $14.7B invested. Looking under the hood, the 2024 result was partly driven by the following rounds:

- Physical Intelligence, Series A ($400M round, $2.4B post-money)

- Skild AI, Series A ($300M round, $1.5B post-money); the company subsequently raised a $500M Series B in January 2025 at a $4B post-money valuation

- Figure AI, Series B ($675M round, $2.6B post-money); the company subsequently raised a $1.5B Series C in January 2025 at a $39.5B post-money valuation

- Skydio, Series E ($425M round, $2.5B post-money)

- The Bot Company, Seed ($150M round, $550M post-money); the company subsequently raised another $150M at $2B post-money in March 2025

- Zipline, Series G ($350M round, $5.15B post-money)

- Anduril, Series G ($1.5B round, $14B post-money)

- Sereact (€25M Series A led by Creandum)

Taken together, recent VC funding activity reflects a genuine belief in a future enabled by robust and reliable robotics, and in the value that can be unlocked along the way. Talent has likewise been flooding into the Robotics AI space from top universities and corporates. Recent high-profile departures of professors from top-tier technical institutions (MIT, Stanford, Berkeley, CMU) and of engineers from leading robotics teams at companies like Tesla, Cruise, and Amazon to launch their own intelligent robotics startups signal a clear inflection point in the momentum surrounding the field. While exact statistics on the flow of talent into the space are limited, other indicators support this view:

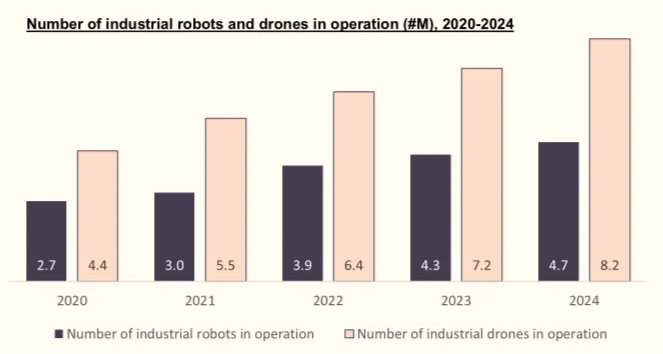

Source: Statista, The Robot Report

A clear and steady increase in the number of industrial robots and drones in operation indicates increases in sophistication, production and adoption of said machinery, which all come with underlying talent requirements. With the robotics space still perceived to be in its infancy, particularly due to unsolved technical challenges, talent requirements increase every year.

This growing excitement has also reached broader levels of academia, as evidenced by the rapid expansion of initiatives like the FIRST Robotics Program. Participation in the program grew from 1,200 students in 2020 to an impressive 785,000 in 2024, reflecting a remarkable surge in interest among younger generations.

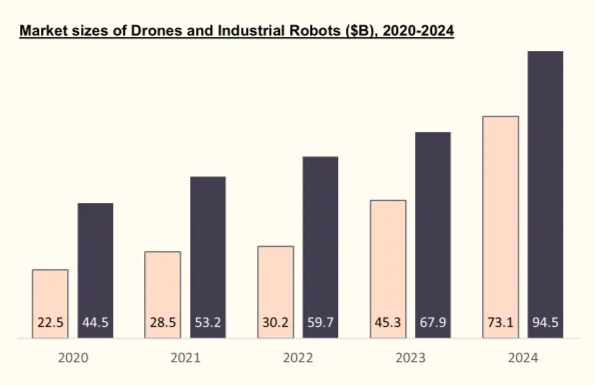

One can also draw similar conclusions when looking at the large and rapidly growing market sizes for industrial robotics and drones, respectively currently estimated at $94.5B (20.1% CAGR) and $73.1B (34% CAGR).

Sources: Grand View Research, Zion Market Research, Spherical Insights, Precedence Research
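To make these growth rates concrete, the sketch below compounds each market estimate forward using the standard CAGR relation, future size = current size × (1 + rate)^years. The five-year horizon and the function name `project_market_size` are assumptions for illustration only; the cited sources may use different base years and forecast windows.

```python
def project_market_size(current_size_bn: float, cagr: float, years: int) -> float:
    """Compound a market size forward: size * (1 + cagr) ** years."""
    return current_size_bn * (1.0 + cagr) ** years


# Market sizes and growth rates as quoted in the text.
# The 5-year horizon is an assumption, not a figure from the sources.
HORIZON_YEARS = 5
markets = {
    "industrial robotics": (94.5, 0.201),
    "drones": (73.1, 0.34),
}

for name, (size_bn, cagr) in markets.items():
    projected = project_market_size(size_bn, cagr, HORIZON_YEARS)
    print(f"{name}: ${size_bn}B -> ${projected:.1f}B after {HORIZON_YEARS} years")
```

Because growth compounds, the smaller but faster-growing drone market overtakes industrial robotics within this assumed horizon, which is why the growth rate matters as much as the current market size.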

With the robotics space experiencing very encouraging traction on several fronts, and sophisticated capital and talent flowing into it, the foundations for a GPT moment are being set.

2. The Intelligent Robotics Ecosystem

The Robotics AI Ecosystem

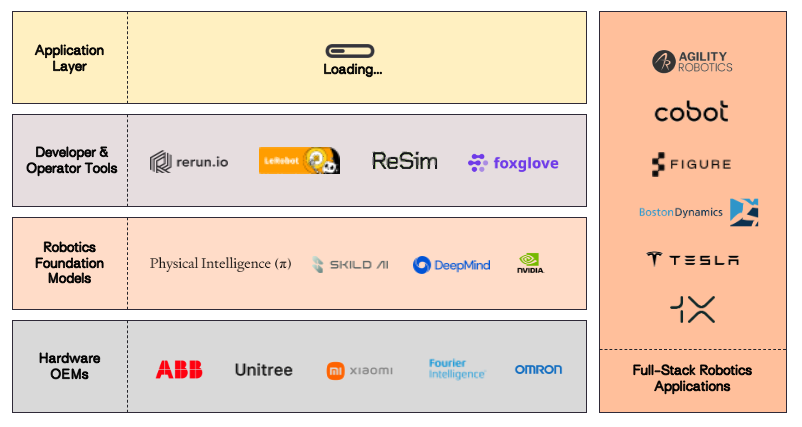

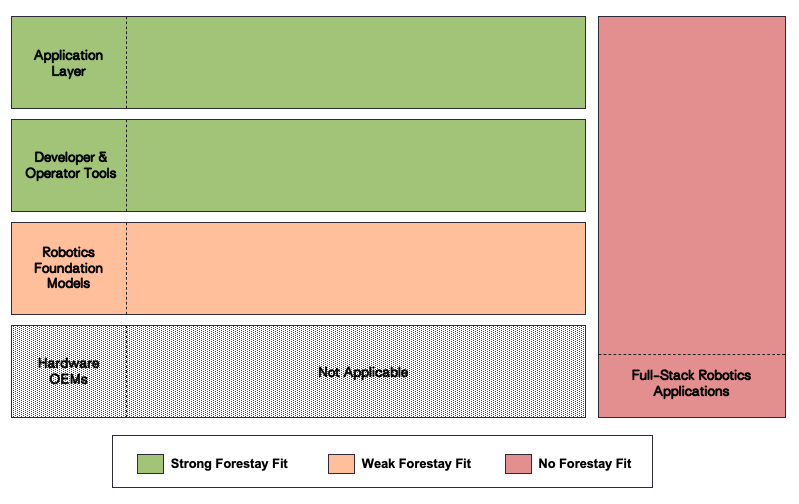

The budding Robotics AI ecosystem bears notable similarities to the Enterprise AI ecosystem we’ve previously explored. Both share a fundamental structure: an application layer built atop a tooling layer, which itself rests on a foundation model layer. However, significant differences exist between these ecosystems. The robotics landscape places greater emphasis on hardware OEMs as critical components. Additionally, full-stack solutions combining both hardware and software capabilities appear to be emerging as a dominant business model in robotics applications.

While both ecosystems aim to leverage AI for transformative applications, robotics focuses on physical-world interactions through hardware-software synergy, whereas enterprise AI is centred around optimising digital workflows using LLMs.

In the following sections, we will explore each of the ecosystem’s layers in some more detail and evaluate their respective fits with Forestay’s thesis.

2.1. Foundation Model Layer

Much like the foundation model layer in LLMs, the foundation model layer in robotics comprises companies working on generalizable models for robotics. These foundation models aim to enable zero-shot capabilities (performing tasks without prior task-specific training) and to give robots the ability to generalize their knowledge to novel cases, importantly allowing them to adapt to new, unstructured settings.

While Foundational Models for virtual intelligence (e.g. LLMs) and Foundational Models for physical intelligence (e.g. Vision Language Action Models (VLAMs)) occupy similar positions in their respective ecosystems, they also differ in meaningful ways:

| Category | Virtual Intelligence | Physical Intelligence |
|---|---|---|
| Example | LLMs (ChatGPT, etc.) | VLAMs (Sereact, Skild AI, etc.) |
| Data Types | Information only (image, text, audio) | Force, kinematics, etc. (information + energy, power, etc.) |
| Data Collection | Collected from the web (existing) | Tele-operations, simulations, etc. (needs to be collected) |
| Cost of Failure | Low: human in the loop, often subjective answers | High: full autonomy, many ways to fail |
| Action | Image, text, audio (not time critical) | Force, kinematics, etc. (time critical) |

The above table provides a comparison between Virtual Intelligence and Physical Intelligence, highlighting their distinct characteristics and applications. Virtual Intelligence is centred around processing information-based data, such as images, text, and audio, which are often sourced from the web or other pre-existing datasets. Its operations are relatively low-risk because human oversight typically plays a key role in decision-making, ensuring that errors can be caught and corrected. Additionally, the actions driven by Virtual Intelligence are not time-sensitive, focusing more on tasks that don’t require immediate physical responses.

On the other hand, Physical Intelligence operates in a completely different domain. It deals with highly complex data types like force, kinematics, energy, and power – data that must be collected in real-time using advanced methods such as tele-operations or simulations. The stakes are much higher in this realm because Physical Intelligence often relies on full autonomy, meaning that failures can lead to significant consequences. Moreover, its actions are inherently time-critical and involve precise physical interactions, such as applying force or controlling movement. This contrast underscores the unique challenges and roles of these two forms of intelligence in modern technology.

2.2 Developer & Operator Tools

The Developer & Operator Tools layer of the ecosystem can be roughly split into two categories: the Operations and Maintenance layer, which ensures that robots are reliable and effective once they’re out in the world, and the Developer Tools and Datasets layer, which lays the groundwork by equipping developers with everything they need to build smarter, more capable robotic systems.

Developer Tools & Datasets

The Developer Tools and Datasets layer focuses on the creation and refinement of robotic systems before they are deployed. This is where developers design, test, and train robots to perform specific tasks. Tools like simulation environments (e.g., NVIDIA Isaac Sim) play a crucial role here by providing virtual spaces where developers can safely experiment with different algorithms or hardware setups without risking damage to physical robots. These simulations allow for extensive testing under various conditions, helping developers fine-tune their systems before real-world deployment. In addition to simulation tools, this layer includes software development kits (SDKs) that provide pre-built libraries and frameworks to make programming robots easier. Middleware platforms like ROS (Robot Operating System) further simplify development by standardizing how different components of a robot communicate with each other. Finally, datasets are critical for training robots—whether it’s teaching them how to navigate complex environments or perform specific tasks using machine learning techniques like reinforcement learning. These datasets provide the raw material that allows robots to learn from examples or past experiences.

Operations & Maintenance

The Operations and Maintenance layer is all about ensuring that robots function smoothly and efficiently in real-world environments after they’ve been deployed. This involves managing their day-to-day operations, monitoring their performance, and addressing any issues that arise. For example, if a robot in a warehouse encounters an obstacle or malfunctions, this layer ensures there are systems in place to troubleshoot and resolve the problem quickly. It also includes preventative maintenance—regular checkups and updates to keep the robots running optimally and to minimize downtime. For organizations using fleets of robots, this layer often involves tools for fleet management, which help coordinate multiple robots to work together seamlessly, schedule tasks intelligently, and integrate with broader business systems like inventory or logistics software. Additionally, this layer collects data from the robots’ operations, which can be analyzed to identify inefficiencies or areas for improvement, ensuring continuous optimization.

2.3 Full Stack Robotics Applications

In contrast to the other layers, which “stack” on top of each other, some companies have opted for a “full stack” or vertically integrated approach – creating end-to-end solutions by combining custom-built hardware with proprietary software, ensuring seamless interaction between the two. These companies don’t rely on off-the-shelf components or third-party software; instead, they develop their own robotic hardware — such as actuators, sensors, and processors — alongside specialized software for control, navigation, and task execution. By doing so, they can fine-tune every aspect of the system to meet specific use cases.

For instance, companies like Boston Dynamics and Tesla exemplify this approach by building robots that are not only physically capable but also powered by advanced AI-driven software tailored to their hardware. This vertical integration allows these robots to perform complex tasks such as autonomous navigation, object manipulation, or even human-like movement with precision and efficiency.

The advantage of this model lies in its ability to address unique challenges that arise from the interplay between hardware and software. For example, in autonomous vehicles or humanoid robots, computational workloads are highly specialized and require custom solutions that off-the-shelf components cannot provide. By integrating both layers, these companies can optimize for cost efficiency, safety, and reliability while scaling their systems for broader applications.

Expert Call

“This is what we used to call the Apple versus Android question. Apple does the software and the hardware together and has big advantages, and then you can think of Android as a (software only) Operating system. […] I’m sure robotics AI companies would love to just say, hey, we’ve got the operating system and all the smarts to run your humanoid robot. A lot of companies want to do that, but I’ve never seen it work. The next phase of robotics is going to be even harder than what I’ve seen them apply it to in the past because humanoids are as hard as it gets. I think software only companies developing Operating Systems are going to struggle.”

2.4 Application Layer

The application layer of the robotics AI stack is beginning to crystallize as workflow‑specific intelligence tightly coupled to real‑world deployments. Early leaders such as Sereact illustrate that this layer does not resemble traditional end‑user software, but rather a domain‑specific orchestration layer that translates business intent into reliable physical execution.

In contrast to classical robotics stacks – where application logic is hard‑coded and brittle – this emerging layer is increasingly powered by generalized robotics foundation models trained on large volumes of real‑world interaction data. In Sereact’s case, the application layer sits between warehouse management systems (WMS/WES/WCS) and standardized robot hardware, receiving task context (orders, SLAs, exceptions) and autonomously planning, executing, and validating physical actions such as pick‑and‑place across heterogeneous environments.

Crucially, these applications are use case‑first rather than horizontal. Instead of generic “robot apps,” they encode deep understanding of specific industrial workflows – e.g., warehouse pick & place, bin handling, exception recovery – allowing robots to operate in unstructured, high‑variance settings without per‑site reprogramming. This mirrors the evolution of Enterprise AI, where value has accrued not at the model layer alone, but in applications that embed domain knowledge, integrations, and operational feedback loops.

Over time, we expect the robotics application layer to converge on a small number of high‑value industrial domains—manufacturing, logistics, and healthcare—where labor intensity, variability, and economic pressure justify continuous learning systems. The defensibility of these applications will stem less from UI or control logic, and more from deployment density, data flywheels, and learning velocity, as each real‑world action incrementally improves performance across the installed base.

2.5 Full Stack Approach

Full-stack robotics applications present a compelling approach, given the critical role of hardware and the advantages of native software-hardware integration. However, this approach is less favourable for startups due to its capital-intensive nature and significant upfront investment in infrastructure. Companies with existing manufacturing capabilities — such as Tesla and Xiaomi — are likely to have a competitive edge over startups in this domain.

Forestay Fit Evaluation for the Robotics Ecosystem

3. Key Use Cases & Form Factors

While robotics remains a fairly nascent segment within the realm of technology, certain types of robots have been operating in production for decades. In this section, we’ll explore the main industrial applications and types of robots, and assess their respective levels of maturity.

3.1 Primary Industrial Applications

- Manufacturing & Production: Industrial robot arms are extensively used for high-precision, repetitive tasks such as welding, painting, assembly, and quality inspection. These robots are typically large, fixed systems integrated into production lines, enabling high throughput and consistent product quality. For example, in automotive manufacturing, robot arms perform spot welding on car bodies with sub-millimetre repeatability, dramatically increasing efficiency and safety compared to manual labor.

- Material Handling: Both industrial robots and mobile autonomous robots are used to move, load, and position raw materials, components, or finished goods. These systems automate processes like palletizing, sorting, and machine tending, reducing cycle time and manual strain. For instance, robotic palletizers in a packaging plant can handle thousands of units per hour, improving throughput while minimizing errors and worker injuries.

- Logistics and Warehousing: Autonomous mobile robots are widely deployed to transport inventory, perform order picking, and restock shelves. Equipped with navigation systems and integrated into warehouse management software, these robots optimize fulfilment speed and space utilization. A leading example is Amazon’s Kiva robots, which autonomously transport shelving units to human pickers, reducing retrieval times and floor space requirements.

- Maintenance & Utility: Mobile and climbing robots, including drones and quadrupeds, are increasingly used for inspection, cleaning, and minor repairs in hard-to-reach or hazardous environments. These robots improve uptime and reduce human exposure to risk. For example, quadruped robots like Boston Dynamics’ Spot are used in oil and gas facilities to autonomously inspect pipelines and equipment, capturing thermal and visual data while navigating uneven terrain without the need for scaffolding or shutdowns.

3.2 Form Factors

Picking Arms (Industrial Robot Arms, Collaborative Robot Arms)

- Standard Industrial Robot Arms: High-speed, high-precision machines primarily used in manufacturing sectors such as automotive, electronics, and metal fabrication for tasks like welding, painting, and assembly. Their strength, repeatability, and ability to operate continuously make them ideal for mass production, though they require safety barriers and complex integration. These machines run on pre-programmed algorithms that let them perform a small number of highly specific tasks, without the assistance of any type of artificial intelligence. Leaders in the space include the likes of ABB, Fanuc, KUKA, Universal Robots and Denso Robotics.

- Collaborative Robot Arms (Co-Bots): In contrast, Co-bots are engineered to safely operate alongside humans without cages, using force-limiting sensors and intuitive programming. Common in light manufacturing, logistics, and lab automation, their key advantage is flexibility and ease of deployment. However, this added safety comes at the expense of speed and payload capacity: Co-bots are built with force-limiting joints and lighter structures and are programmed with lower maximum speeds, all of which limit the force and velocity they can apply.

Autonomous Robots

Autonomous robots, including mobile robots and drones, navigate and perform tasks independently using AI and sensor fusion; they are widely used in warehouses, agriculture, and healthcare for functions such as material transport, inspection, and monitoring. Their adaptability is a strength, although two factors limit their performance:

- High costs: Self-navigation, perception and decision-making require advanced software and hardware. These robots rely on a suite of sophisticated sensors such as LiDAR, cameras, GPS and inertial measurement units, paired with powerful onboard computing for real-time data processing and AI-based control algorithms.

- Complex environments: Unpredictability and variability within environments still very much challenge autonomy systems. Factors such as dynamic obstacles (e.g. a kid chasing a ball on a road), poor lighting, uneven terrain and electromagnetic interference can impair sensory accuracy and, therefore, task execution. As a result, these robots perform best in controlled environments such as warehouses, where environmental settings and variables can be kept constant.

Quadrupeds

Quadrupeds, four-legged robots designed for stability on uneven terrain, are uniquely suited for industrial inspections, defense, and construction, where human access is limited. They offer superior mobility but have limited payload and shorter battery life. Boston Dynamics is one of the clear leaders in the field with its quadruped “Spot”, which specializes in assisting with site monitoring and operations.

Humanoids

Humanoids mimic human form and behavior to interact intuitively in service, retail, and research environments. As previously mentioned, while they represent the frontier of human-robot interaction, their high cost, limited durability, and technical challenges currently constrain widespread commercial deployment. Nonetheless, a few companies such as Engineered Arts, Unitree, Honda and Figure AI are leading the charge and have released prototypes of highly impressive Humanoids.

4. Key Challenges

4.1 General Challenges in Robotics

As previously mentioned, while progress within the field of robotics is being made, there are several key challenges hindering transformational progress.

Limitations of existing robots:

- Limited usability and versatility: The majority of robots in operation have been designed to excel at one task (in some cases a few more) while being unable to perform anything else.

- Expensive: Modern robots are highly sophisticated, capex-intensive pieces of equipment that mainly benefit corporations with the largest budgets.

- Complicated logistics: For the most part, industrial robots are heavy machinery, sometimes fixed (and thus immovable), requiring continuous maintenance, software updates and adjustments.

- Low user-friendliness: These are highly sophisticated machines that require specific knowledge and training to operate. There is also a software challenge: the software stack is fragmented, which makes managing a fleet of robots from different providers highly complicated.

Technical challenges hindering progress:

- Gap between research and production: Academic advancements in robotics have been considerable, but practical application has consistently failed to keep pace. The combination of cost, time, talent and facility requirements makes it tremendously complicated to bring these theories into the real world. In addition, extreme reliability requirements widen that gap, as academic research often doesn’t foresee all production bottlenecks, which then have to be solved through lengthy and costly testing and iteration.

- Hardware requirements and cost: The more tasks expected from a robot, the higher the hardware requirements. Taking the extreme example of the humanoid, one can imagine the amount of highly sophisticated hardware required on every inch of the body to enable it to complete even a few mundane tasks such as pouring a glass of water. Given the cost and development complexity involved, and the questionable business case that results, it becomes clear why the dream of a humanoid coming close to human capabilities remains distant.

- Data: In order to achieve the end goal of the perfectly intelligent robot, the data chasm must be bridged. Simply put, a robot would require “all the data in the world” in order to reach that state. Data is scarce and data collection is expensive. Several companies are attempting to solve this problem, and foundational AI models will increasingly be able to assist on that front, but the issue remains a considerable blocker to progress today.

4.2 Challenges in Robotics AI

Precise “Action Language” does not exist yet

One of the key challenges of AI in robotics — distinct from the way large language models (LLMs) are trained — is that human physical skills rely heavily on subconscious actions we neither fully understand nor have the language to describe. Because we often perform these movements without conscious awareness, we lack a precise vocabulary to capture them in detail. This absence of a structured “action language” makes it difficult to quantify and tokenise movement for AI training in VLAMs. Fundamental research is needed (and currently being conducted) to bridge this gap.
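To illustrate what “tokenising movement” can mean in practice, the sketch below uses uniform binning: each dimension of a continuous action (for example a normalised joint velocity) is clipped to a range and mapped to one of a fixed number of integer tokens. This is one simple scheme assumed here for illustration, not a method attributed to any company named in this paper. The range [-1, 1], the 256 bins, and the function names are all hypothetical choices.

```python
from typing import List

NUM_BINS = 256          # assumed token vocabulary size per action dimension
LOW, HIGH = -1.0, 1.0   # assumed normalised action range


def tokenize_action(action: List[float]) -> List[int]:
    """Map each continuous value to an integer bin index in [0, NUM_BINS - 1]."""
    tokens = []
    for value in action:
        clipped = min(max(value, LOW), HIGH)
        fraction = (clipped - LOW) / (HIGH - LOW)   # position within the range, 0.0 to 1.0
        tokens.append(min(int(fraction * NUM_BINS), NUM_BINS - 1))
    return tokens


def detokenize_action(tokens: List[int]) -> List[float]:
    """Recover the bin-centre value for each token (a lossy inverse)."""
    bin_width = (HIGH - LOW) / NUM_BINS
    return [LOW + (t + 0.5) * bin_width for t in tokens]


# Hypothetical 3-dimensional action, e.g. three normalised joint velocities.
action = [0.12, -0.87, 0.5]
tokens = tokenize_action(action)
recovered = detokenize_action(tokens)
print(tokens, recovered)
```

Binning of this kind turns motion into a sequence a language-style model can consume, with a round-trip error of at most half a bin width. It does not address the deeper gap described above: the tokens encode numbers, not a structured vocabulary of what the movement means.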

Data scarcity is limiting foundation model training

Expert Call: Senior Director of Simulation Technology

“When you have a specific problem that you need to solve, like if, for example, you’re trying to understand what the weeds in a field look like to automate the weed spraying machine that’s going to be dragged across it, you have to reproduce that data. The problem is it’s really expensive to go out and gather real-world data. If I need weeds at every stage of their lifecycle development, what am I going to wait, take an image every hour on every patch of my field in order to understand everything that’s growing out? There must be a better way. You need tools that create data that can at least get these models most of the way towards what they need to do.”

Another key challenge is that robotic-specific data, and 3D data in particular, is scarce compared to the internet-scale text and image data used to train large language and vision models. This remains a crucial limiting factor to robotics having a ChatGPT-like moment and is a highly important academic research area. Some methods being tested to bridge this data gap include:

- Training using unstructured “play data” and unlabelled videos of humans: Language-conditioned learning, such as language-conditioned behavioural cloning or affordance learning, relies on large annotated datasets. To scale learning, some researchers have proposed using tele-operated human-provided play data instead of fully annotated expert demonstrations. Play data is unstructured, unlabelled, inexpensive to collect, and rich in diversity, as it does not require scene staging, task segmentation, or resets. While promising, existing training approaches share a key limitation: a robot’s physical skills are constrained to the distribution of movements present in its training data, preventing it from generating new motions. To overcome this limitation, one approach leverages motion data from videos of humans performing various tasks. The motion information extracted from these videos can then be used to enhance the robot’s ability to acquire and generalize physical skills.

- Data augmentation using diffusion models: Collecting robotics data requires real-world interaction, making the process costly (and potentially unsafe). One approach to address this challenge is leveraging generative AI, such as text-to-image diffusion models, for data augmentation to introduce novel objects, backgrounds, and distractors into robot manipulation datasets with textual guidance.

- High fidelity synthetic data generation via simulation: High-fidelity simulation using game engines offers an efficient way to collect data, particularly for multimodal and 3D perception tasks in robotics. Simulation-based data collection enables the generation of multimodal sensor data with precise ground truth labels, such as stereo RGB images, depth maps, segmentation masks, optical flow, camera poses, and LiDAR point clouds (etc).
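The appeal of simulation is that labels come for free, because the generator already knows the scene. The toy sketch below makes that point with a hypothetical one-object scene: it writes a depth map and a pixel-exact segmentation mask in the same pass. It is a stand-in for a game-engine pipeline, not an example of one; the scene layout, image size, depth values, and function name are all assumptions.

```python
import random
from typing import List, Tuple


def render_scene(width: int, height: int, seed: int) -> Tuple[List[List[float]], List[List[int]]]:
    """Return (depth_map, segmentation_mask) for one random box on a flat floor."""
    rng = random.Random(seed)
    floor_depth = 2.0                      # metres from the camera (assumed)
    box_depth = rng.uniform(0.5, 1.5)      # the box always sits closer than the floor
    box_w = rng.randint(2, width // 2)
    box_h = rng.randint(2, height // 2)
    x0 = rng.randint(0, width - box_w)
    y0 = rng.randint(0, height - box_h)

    depth = [[floor_depth] * width for _ in range(height)]
    mask = [[0] * width for _ in range(height)]   # 0 = background, 1 = box
    for y in range(y0, y0 + box_h):
        for x in range(x0, x0 + box_w):
            depth[y][x] = box_depth
            mask[y][x] = 1                 # the label is exact by construction
    return depth, mask


# Generate a small labelled dataset with varied object size and placement.
dataset = [render_scene(16, 12, seed) for seed in range(100)]
depth, mask = dataset[0]
print(sum(map(sum, mask)), "labelled box pixels in sample 0")
```

No human annotation step appears anywhere in the loop, which is the cost advantage. The open question for real pipelines is fidelity: whether models trained on rendered data transfer to real sensors.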

Most robotics applications face a “99.X” problem

The “99.X problem” highlights a major challenge in deploying robot learning in real-world settings: most applications require near-perfect reliability — typically 99% or higher — which current models can’t achieve. While academic results often report around 80% success, pushing beyond that becomes exponentially harder, with each incremental improvement requiring significantly more effort. Vincent Vanhoucke of Google Robotics & Waymo argues that reaching 99.X% performance isn’t just a matter of scaling existing approaches — it likely demands entirely new methods. Even today’s most advanced AI models in vision and language, like GPT-4V, don’t reach that level of reliability on unfamiliar tasks, and robotics is even further behind.
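A rough calculation shows why the gap between 80% and 99.X% matters commercially. The sketch below assumes independent attempts and a hypothetical workload of 10,000 picks per day. It then adds a second assumption: a task made of several steps succeeds only if every step does, so per-step reliability compounds. Neither the workload, the step count, nor the independence assumption comes from the sources cited in this paper.

```python
def expected_failures(success_rate: float, attempts: int) -> float:
    """Expected number of failed attempts, assuming independent trials."""
    return (1.0 - success_rate) * attempts


def task_success_rate(step_success_rate: float, steps: int) -> float:
    """Success rate of a task whose steps must all succeed independently."""
    return step_success_rate ** steps


PICKS_PER_DAY = 10_000   # hypothetical warehouse workload
STEPS_PER_TASK = 10      # hypothetical number of sub-steps in one task

for rate in (0.80, 0.99, 0.999):
    failures = expected_failures(rate, PICKS_PER_DAY)
    chained = task_success_rate(rate, STEPS_PER_TASK)
    print(f"{rate:.1%} per attempt: ~{failures:.0f} failures/day, "
          f"{chained:.1%} success on a {STEPS_PER_TASK}-step task")
```

Under these assumptions, an 80% model produces roughly 2,000 failed picks per day, against about 100 at 99% and about 10 at 99.9%. On a ten-step task the same three rates yield roughly 11%, 90%, and 99% end-to-end success. Each failure is a potential human intervention, which is why a result that looks strong in a paper can still be uneconomic in deployment.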

4.3 Lengthy Timelines

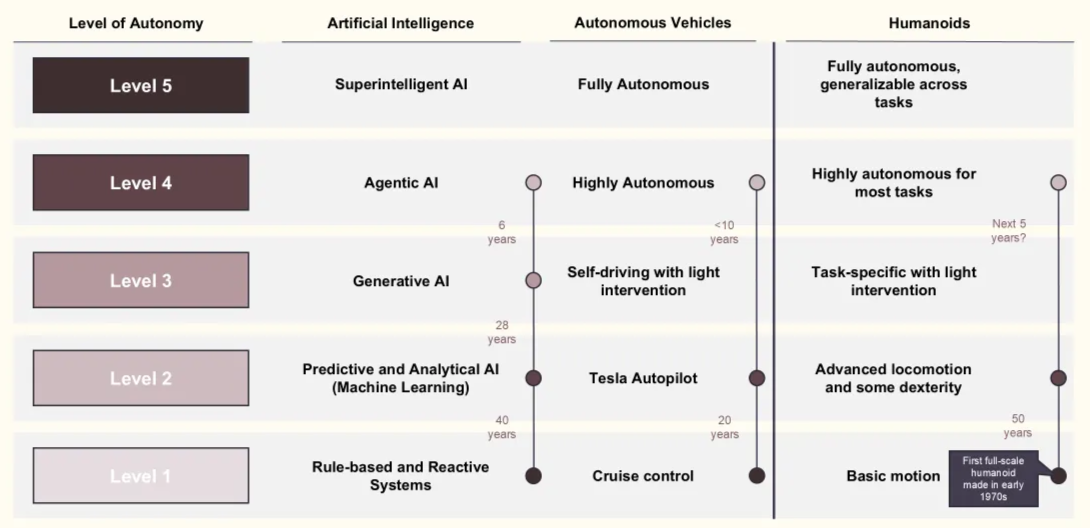

We’ve alluded to the vision of the fully autonomous humanoid and the underlying limitations to full scale commercial deployments, and looking at estimated timelines, it becomes clear that the combined fields of AI, Data and robotics still have a long way to go. The figure below is a modified representation of a timeline towards the fully autonomous Humanoid (initially published by Coatue), comparing stages of development in AI & Autonomous vehicles on the basis of Levels of Autonomy (From 1-5) and estimations of how many years it will take for each field to get to the next stage.

Levels of Autonomy reached and estimations for AI, Autonomous Vehicles and Humanoids (Source: Coatue, modified by Forestay)

Looking at these, we can draw the following conclusions:

- It took a significant amount of time and progress for humanoids to reach more advanced locomotion capabilities and dexterity: a staggering 50 years since the production of the first full-scale humanoid.

- Today, humanoids sit at the low end of Level 3, and even these remain prototypes that are far too expensive and unreliable for mass commercialisation.

- Coatue estimates that highly autonomous humanoids, able to perform most tasks, are about 5 years away. Our research yields a more pessimistic view: several experts claim that hardware and software/data constraints will take much longer to solve before a humanoid can perform a vast array of tasks.

- Fully autonomous intelligent humanoids are so far in the future that a timeline is very difficult to estimate. Because of the 99.X problem (discussed above), we believe that progress for many, if not most, intelligent robotics applications will follow a timeline closer to that of AVs. However, a subset of intelligent robotics applications that require less reliability out of the box could arrive sooner, although it remains difficult to estimate when.

5. Conclusion

As outlined throughout our research, the core constraint holding back intelligent robotics is not hardware capability or theoretical model architectures, but data. Unlike language or vision, robotics lacks internet‑scale datasets: real‑world interaction data is scarce, expensive to collect, and difficult to simulate with sufficient fidelity. This data gap, combined with the “99.X problem” – the need for near‑perfect reliability in physical environments – means that progress in robotics AI is fundamentally bottlenecked by learning from real‑world execution rather than research alone.

While significant capital is flowing into robotics foundation models, our research highlights that many of these efforts remain research‑heavy and deployment‑light, with long and uncertain timelines before they reach production‑grade reliability. In contrast, the strongest signals of near‑term value creation are emerging in software‑led, application‑layer systems that are already embedded in live industrial workflows and can compound learning through continuous use.

Against this backdrop, Forestay’s approach is to actively prioritise software‑first, hardware‑agnostic opportunities that directly address the data bottleneck by design. These are platforms that run on existing industrial hardware, operate in production environments today, and generate proprietary datasets as a by‑product of customer adoption. By coupling real‑world deployments with tight feedback loops, such companies are uniquely positioned to improve reliability over time and to build defensible data moats that cannot be replicated through simulation or capital alone.

References and Resources:

https://press.airstreet.com/p/sereact-series-a?utm_source=substack&utm_medium=email

https://www.technologyreview.com/2024/04/11/1090718/household-robots-ai-data-robotics/

Coatue_ThePathToGeneralPurposeRobots.pdf

https://www.technologyreview.com/2025/01/23/1110496/whats-next-for-robots/#:~:text=All

https://www.youtube.com/watch?v=hL_GKapQd1k&ab_channel=Weights%26Biases