In 1997 a software developer called Eric Raymond first published his essay “The Cathedral and the Bazaar”, sharing his views on open source software development and why it should be done as openly as possible.

- The “Cathedral model” refers to source code being made available with each software release, while code developed between releases is restricted to an exclusive group of developers. This traditional release cycle is still common in enterprise SaaS.

- The “Bazaar model” refers to code that is developed online in full view of the public. Raymond credits Linus Torvalds, leader of the Linux kernel project, as the inventor of this process, which went on to shape today’s open source movement.

In the essay, Raymond coined the term “Linus’s Law”:

Given enough eyeballs, all bugs are shallow.

In other words, the more widely available the source code is for public testing, scrutiny and experimentation, the more rapidly all forms of bugs will be discovered. By contrast, working in a silo under the Cathedral model means spending a disproportionate amount of time and energy hunting for bugs.

For enterprise software investors and operators, understanding this open source dynamic is critical. The same forces – transparency, community iteration, and distributed innovation – are now reshaping not only infrastructure software, but increasingly AI and agentic systems as well.

1. Defining OSS

Open source software (OSS) has evolved from a niche philosophy into foundational infrastructure for modern B2B software. OSS refers to software with freely accessible source code that can be viewed, modified, and redistributed for commercial or non-commercial use.

Understanding OSS is not merely technical—it is strategic. The open source ecosystem is built on three core principles: transparency, collaboration, and decentralization. These principles drive lower adoption barriers, faster innovation cycles, and community-led growth—often reducing customer acquisition costs relative to proprietary models.

For enterprises, OSS delivers:

- Flexibility and customization

- Access to cutting-edge innovation

- Reduced vendor lock-in

- Lower licensing costs

- Strong ecosystem support

At scale, OSS also enables faster development cycles, seamless integration, and operational resilience—critical advantages in competitive B2B markets.

2. Why would an enterprise procure from an open source provider?

Enterprise “procurement” of OSS typically means formalizing adoption through a commercial vendor that provides support, compliance, and enterprise-grade tooling around an open core.

Enterprises adopt OSS for several strategic reasons:

- Cost efficiency – Lower licensing costs vs. proprietary software, with budgets redirected toward customization or support.

- Enterprise-grade support – Vendors (e.g., Red Hat, SUSE, MongoDB Inc.) provide SLAs, security patches, and long-term maintenance.

- Compliance & security – Certified, regularly updated software aligned with regulatory standards.

- Faster time-to-value – Migration support, integrations, tooling, and training accelerate deployment.

- Customization without lock-in – Flexibility to extend software without being bound to a single vendor’s roadmap.

In short, enterprises seek the innovation and flexibility of OSS—with the reliability and accountability of commercial support.

Case study: n8n — From Open-Source Core to Enterprise Favourite

n8n (founded in 2019, Berlin) occupies the middle ground between lightweight automation tools like Zapier and complex, engineer-heavy orchestration platforms. It combines low-code usability with deep extensibility and self-hosting—ideal for enterprises that have outgrown basic trigger-action workflows but don’t want to build infrastructure from scratch.

Originally launched as a workflow automation tool, n8n has repositioned itself as a universal AI automation layer, connecting LLMs with traditional SaaS and data systems. This AI-first framing has accelerated adoption among enterprises looking to operationalize AI across multiple workflows.

The open-source community edition allows teams to self-host and experiment without procurement friction or usage-based pricing. This has fueled a strong ecosystem—thousands of templates and 1,000+ integrations—creating compounding product value and discoverability.

n8n’s GTM strategy prioritizes community-led, bottom-up adoption rather than traditional top-down sales. Teams deploy the product organically; as workflows become mission-critical, they effectively pre-sell the enterprise version internally before formal procurement begins.

The enterprise offering monetizes what the open core cannot easily provide:

- Advanced security and governance

- Observability and auditability

- Multi-user collaboration

- High-availability and reliability at scale

This creates a natural upgrade path: maintain open-source flexibility while adding enterprise-grade controls and support. Customers include Microsoft, Vodafone, and SEAT.

Importantly, n8n avoids aggressive usage-based billing common in closed automation tools. Its pricing is structured to remain economical at scale, making it attractive for organizations running high volumes of automations. Combined with internal champions from the open-source base, this lowers friction when standardizing on n8n enterprise-wide.

| Factor | How it worked for n8n | Enterprise impact |
|---|---|---|
| Open‑source core | Free, self‑hostable, extensible platform with rich community templates and integrations. | Drives organic, bottom‑up adoption and de‑risks vendor lock‑in concerns. |
| Community‑led growth | Focus on contributors, content, and usage instead of gated leads. | Creates in‑house champions who later advocate for enterprise standardization. |
| Clear enterprise SKU | Added governance, security, observability, and collaboration on top of the core. | Gives CIOs and security teams a reason to buy without disrupting existing workflows. |
| Cost and flexibility | Workflow‑friendly pricing and multi‑deployment options vs. usage-based SaaS pricing. | Makes large‑scale automation economically viable, encouraging consolidation on n8n. |
| AI orchestration focus | Positioning as a “universal AI automation layer” connecting LLMs and systems. | Aligns directly with current enterprise priorities around AI enablement with governance. |

3. Deep Dives

3.1. Cloud infrastructure

The OSS infrastructure landscape has reached a critical inflection point. The foundational battles – Kubernetes versus alternative container orchestrators, Linux versus proprietary operating systems – have been decisively won. The installed base is now enormous, stable, and largely commoditized.

The opportunity now lies above the commodity layer, where complexity, cost, and operational overhead create room for value creation. We assess the space across four layers: Orchestration & Compute, Data Infrastructure & AI, Platform Engineering & Developer Experience, and Emerging Runtimes.

i) Orchestration & Compute — The Commodity Layer

Kubernetes is now the de facto container orchestration standard. Docker, KVM, Firecracker, and related virtualization technologies underpin nearly all modern cloud environments.

The core orchestration battle is over. The opportunity is in making Kubernetes usable, cost-efficient, and manageable for enterprises.

Areas of interest:

- FinOps & cost optimization – Tools reducing Kubernetes and cloud infrastructure spend

- Kubernetes abstraction layers – Unified multi-cloud/on-prem/edge management

- Cost visibility tooling – Platforms explaining and optimizing cluster-level resource allocation

The alpha is no longer in orchestration itself – but in operational intelligence layered on top.
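As a rough illustration of what cost-visibility tooling computes, the sketch below splits a monthly cluster bill across namespaces in proportion to requested CPU and reports the unrequested remainder as idle cost. The function name, namespaces, and figures are hypothetical; real tools typically also weigh memory, storage, and actual usage.

```python
def allocate_cluster_cost(monthly_cost, cpu_capacity_cores, cpu_requests):
    """Split a cluster bill by requested CPU cores; the rest is reported as idle."""
    cost_per_core = monthly_cost / cpu_capacity_cores
    allocation = {ns: cores * cost_per_core for ns, cores in cpu_requests.items()}
    # Capacity nobody requested is still paid for: surface it explicitly.
    allocation["idle"] = monthly_cost - sum(allocation.values())
    return allocation


# Hypothetical cluster: 100 cores costing 10,000 per month.
requests_by_namespace = {"checkout": 30, "search": 20, "batch": 10}
report = allocate_cluster_cost(10_000, 100, requests_by_namespace)

for namespace, cost in report.items():
    print(f"{namespace:>10}: {cost:,.0f}")
```

Surfacing the idle line as its own entry is the design choice that matters here: it turns over-provisioning into a number a platform team can own and reduce.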

ii) Data Infrastructure & AI — The Alpha Layer

Data infrastructure is experiencing a second wave, driven by generative AI.

- Vector databases and AI foundations: Projects such as Milvus, Weaviate, and Qdrant enable semantic search via embeddings—foundational for Retrieval-Augmented Generation (RAG). LLMs are stateless; vector databases provide memory and enterprise data access.

- Streaming & event infrastructure: Kafka, Redpanda, and Pulsar power real-time data pipelines. Increasingly critical for AI-enabled fraud detection, monitoring, and personalization.

- AI-optimized databases: Specialized storage systems tailored to embedding search, low-latency inference, and approximate nearest neighbour workloads.

- Streaming platforms with ML integration: Reducing complexity of building real-time ML pipelines.

This layer remains dynamic and investment-attractive.
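To make the retrieval step behind RAG concrete, here is a minimal sketch of semantic search over embeddings using brute-force cosine similarity. The document ids and three-dimensional vectors are made up for illustration, and this is not how any particular vector database is implemented; production systems use far higher-dimensional embeddings and approximate nearest neighbour indexes rather than scanning every vector.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, index, k=2):
    """Return the ids of the k stored embeddings most similar to the query."""
    scored = [(cosine_similarity(query, vec), doc_id) for doc_id, vec in index.items()]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Hypothetical embeddings of three enterprise documents.
INDEX = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "returns-faq": [0.8, 0.2, 0.1],
}

query_embedding = [0.85, 0.15, 0.05]  # stand-in for an embedded user question
results = top_k(query_embedding, INDEX, k=2)
print(results)
```

In a RAG pipeline, the text of the returned documents would then be passed to the LLM as context, which is how a stateless model gains access to enterprise data.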

iii) Platform Engineering & Developer Experience — The Efficiency Layer

Internal Developer Platforms (IDPs) abstract infrastructure complexity, reducing developer cognitive load. Open source foundations such as Backstage, ArgoCD, and Jenkins underpin modern IDPs. Backstage is becoming the de facto developer portal standard.

As enterprises scale microservices and Kubernetes, DevOps complexity becomes unsustainable. IDPs invert the model: smaller platform teams enable developer self-service.

Investment themes:

- Pre-built, industry-specific IDPs

- Domain-specific platforms (ML, data, mobile)

- GitOps tooling & infra-as-code orchestration

Efficiency gains in DevOps remain compelling.

iv) Emerging Runtimes — The Blue Ocean Layer

WebAssembly (Wasm) represents a fundamentally different compute paradigm:

- Faster startup times: A Wasm module boots in milliseconds versus seconds for a container, making it ideal for serverless and edge computing where startup latency is critical.

- Smaller footprint: Wasm applications are orders of magnitude smaller than equivalent containers, reducing image storage, transfer, and memory usage.

- Improved security isolation: Wasm’s sandboxing model provides fine-grained capability control, preventing many classes of security vulnerabilities that containers are susceptible to.

- Cross-platform consistency: A Wasm binary runs identically across any Wasm runtime, eliminating the “it works on my machine” problem and simplifying deployment.

WebAssembly remains early-stage and can be seen as an infrastructure bet from an investor standpoint. However, adoption is accelerating. Major cloud providers are beginning to offer Wasm runtimes as first-class primitives alongside containers. Should this new infrastructure play materialize, Forestay should look for application frameworks.

3.2. LLMOps and AIOps

The emergence of models like DeepSeek—freely available, cost-efficient, and highly performant—demonstrates that open AI ecosystems are shaping the next wave of technology. While debates persist within the open-source community—particularly around the lack of transparency in training datasets and whether such models qualify as “truly” open—the practical impact is clear: powerful models are becoming more accessible.

As a result, value creation is shifting up the stack. NLP companies are no longer focused solely on processing text; they are building orchestration, deployment, governance, and monitoring layers around LLMs. In short, the category is evolving into LLMOps.

n8n now sits firmly within this LLMOps layer, having evolved from its origins in workflow automation into an orchestration platform connecting models, tools, and enterprise systems.

Below are the segments we find particularly attractive:

| Segment | Attractiveness |
|---|---|
| Core AIOps platforms | Build cloud‑native, LLM‑enhanced operations platforms that sit atop existing observability, cut mean time to repair (MTTR), and optimize cost/performance. |
| Vertical / domain AIOps | Specialize in industry‑specific telemetry, runbooks, and compliance; partner with major vendors in those ecosystems. |
| LLMOps platforms | Provide unified model lifecycle management, multi‑model routing, evaluation, and monitoring with strong developer UX. |
| LLM safety & guardrails | Focus on testing, red‑teaming, policy enforcement, and runtime controls for GenAI apps, especially in regulated sectors. |
| Data/feedback & eval tooling | Own the data and feedback layer that drives continual model improvement and quality governance. |

3.3. Cybersecurity

OSS is increasingly relevant across cybersecurity, particularly where modularity, transparency, and extensibility matter.

i) Detection, monitoring and response

Modern SOCs require modular, scriptable pipelines and shared detection content. Attractive areas include:

| Segment | Attractiveness |
|---|---|
| Cloud‑native security information and event management (SIEM) | SIEM/XDR (extended detection and response) convergence, AI‑driven analytics, and desire to cut tool sprawl. |
| SOC automation | SOCs need automation but lack engineers; OSS connectors reduce build cost. |
| Managed (endpoint) detection and response (MDR/EDR) | Fastest‑growing detection/response market with strong SME and enterprise demand. |
| Detection content & intel | Demand for transparent, testable detection rules and threat intel sharing. |
| Cloud/SaaS threat detection | Traditional SIEM tools struggle with modern multi‑cloud and SaaS telemetry. |

ii) Vulnerability, exposure and attack surface management

There remains strong opportunity in modular, cloud-native platforms that unify vulnerability and configuration scanning across containers, Kubernetes, and cloud environments – reducing tool sprawl while enabling risk-based prioritization.

Demand is also growing for open, extensible solutions that detect exposed credentials and sensitive data across repositories, storage systems, and SaaS applications. In parallel, external attack surface management (EASM) – through continuous discovery of domains, subdomains, certificates, and exposed services – is particularly well suited to OSS models, where transparency and extensibility can drive differentiation.

| Segment | Attractiveness |
|---|---|
| Classic vulnerability management | Disrupt with risk‑based, cloud‑native vulnerability management (VM) that unifies infra, app, and container scanning plus automated remediation. |
| Attack surface management (ASM/EASM) | Build continuous internet‑facing discovery and misconfiguration detection with tight integration into VM and SOAR; strong fit for open‑core and MSSP channels. |
| Exposure management platforms | Create a unifying risk layer above VM, ASM, CSPM, DSPM, and threat intel with AI‑driven prioritization and board‑level reporting. |
| Managed VM / exposure services | Offer “exposure management as a service” on top of an open or proprietary platform; monetize operations, not just software. |
| Vertical/region‑specific solutions | Specialize detection logic, workflows, and reporting for regulated industries or high‑growth regions, possibly in partnership with local MSSPs. |

iii) Application, API and AI security

Forestay has explored this theme extensively as part of Non-Human Identity security and sees the open source community increasingly contributing to this field.

| Segment | Attractiveness |
|---|---|
| Application security (AppSec) | Build integrated, DevSecOps‑native platforms that unify code, dependency, and runtime signals with strong developer UX. |
| API security | Focus on continuous API discovery, behaviour analytics, and runtime protection across multi‑cloud and SaaS. |
| AI / LLM security | Offer LLM‑aware testing, guardrails, and runtime control planes that integrate with software development lifecycle (SDLC) and SecOps tools. |
| Verticalized App/API security | Ship pre‑built policies, templates, and reports that align with sector regulations and cut time‑to‑compliance. |

3.4. Vertical SaaS

Open source has become foundational across enterprises, but its impact is particularly pronounced in Manufacturing, Financial Services, and Healthcare – sectors characterized by legacy infrastructure, regulatory complexity, and high integration needs. In these industries, OSS is not merely a cost lever; it is an architectural enabler.

Manufacturing

Manufacturing’s fragmented ecosystems, legacy OT systems, and multi-vendor environments make open source especially powerful. It allows incremental modernization without large-scale system replacement and reduces dependency on proprietary vendors.

Key opportunity areas:

- Supply chain interoperability: Standardized, open data layers enable real-time information exchange across suppliers, OEMs, and logistics partners. Inter-exchange infrastructure and cross-party operability are attractive themes.

- Predictive maintenance: Open AI frameworks allow asset monitoring across heterogeneous equipment fleets, reducing reliance on vendor-specific systems.

- Compliance as infrastructure: Transparent, audit-ready systems can transform regulatory requirements into a structural advantage.

Financial Services

In financial services, open source has evolved from a cost-saving tool into core strategic infrastructure. Modular, cloud-native architectures reduce vendor lock-in, improve interoperability, and accelerate regulatory alignment.

High-momentum categories include:

- Open Banking & A2A payments: Regulatory tailwinds (e.g., PSD2, Open Finance) drive adoption. Network effects and bank connectivity create defensible platforms (e.g., TrueLayer, Yapily).

- RegTech & compliance automation: Increasing regulatory complexity makes automated compliance mission-critical infrastructure.

- Embedded Finance: API-first platforms enable non-financial companies to integrate financial services, unlocking scalable distribution.

Healthcare

Healthcare combines extreme regulatory requirements with deep interoperability challenges — a natural fit for open source infrastructure.

OSS typically drives adoption at the orchestration, data, and governance layers, while monetization occurs through enterprise-grade security, validation, and compliance.

Two structural areas stand out:

- AI-native drug discovery & bio-computation: Open biological models accelerate target identification and protein design.

- Interoperability infrastructure: Open standards enable ecosystem alignment, while proprietary models capture downstream value.

4. Indicators of open source software success

Measuring OSS success means assessing whether a project has reached “product-community fit”. Successful open source projects on GitHub can be evaluated on four dimensions: popularity, usage and adoption, community health and activity, and maintainability/velocity.

It is worth noting that GitHub is one of the most popular places to host OSS projects because it encourages discoverability and, in turn, collaboration. Large companies may run their own Git servers or self-host entirely, but most companies of interest to Forestay would primarily use GitHub.

| Dimension | Core GitHub metrics | Example KPI diligence questions |
|---|---|---|
| Popularity | Stars, forks, watchers, traffic (views/visitors, referrers) | Is interest growing steadily? How does star/fork ratio compare to peers? |
| Adoption | Used‑by/dependency graph, clones, release downloads | How many other repos depend on this? Are downloads and used‑by trending up? |
| Community | Contributors, new vs. returning contributors, issue/PR counts and flows | Is there a steady flow of outside contributions? Are issues/PRs actively triaged? |
| Maintenance | Commit and release cadence, issue/PR age and closure, maintainer dispersion | Is the project actively maintained with multiple maintainers and reasonable response times? |
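A few of these indicators reduce to simple arithmetic once the raw counts are in hand. The sketch below is one possible way to compute three of them; the helper names and all sample values are hypothetical (they are not n8n's figures), and only the `stargazers_count` and `forks_count` keys follow the naming of the GitHub REST API repository object.

```python
from collections import Counter
from statistics import median


def star_fork_ratio(repo):
    """Stars per fork: interest relative to hands-on experimentation."""
    return repo["stargazers_count"] / max(repo["forks_count"], 1)


def top_author_share(commit_authors):
    """Fraction of commits by the most active author (1.0 = single maintainer)."""
    counts = Counter(commit_authors)
    return counts.most_common(1)[0][1] / len(commit_authors)


def median_days_open(closed_issue_ages):
    """Median number of days issues stayed open before being closed."""
    return median(closed_issue_ages)


# Made-up sample values for illustration only.
sample_repo = {"stargazers_count": 12000, "forks_count": 1500}
sample_authors = ["ana", "ana", "ben", "chen", "ana", "dee", "ben", "ana"]
sample_issue_ages = [1, 2, 2, 5, 30]

print(f"star/fork ratio: {star_fork_ratio(sample_repo):.1f}")
print(f"top author share: {top_author_share(sample_authors):.0%}")
print(f"median days to close: {median_days_open(sample_issue_ages)}")
```

A high top-author share is a proxy for low maintainer dispersion, and the median is used for issue age so that a handful of long-lived issues does not dominate the picture.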

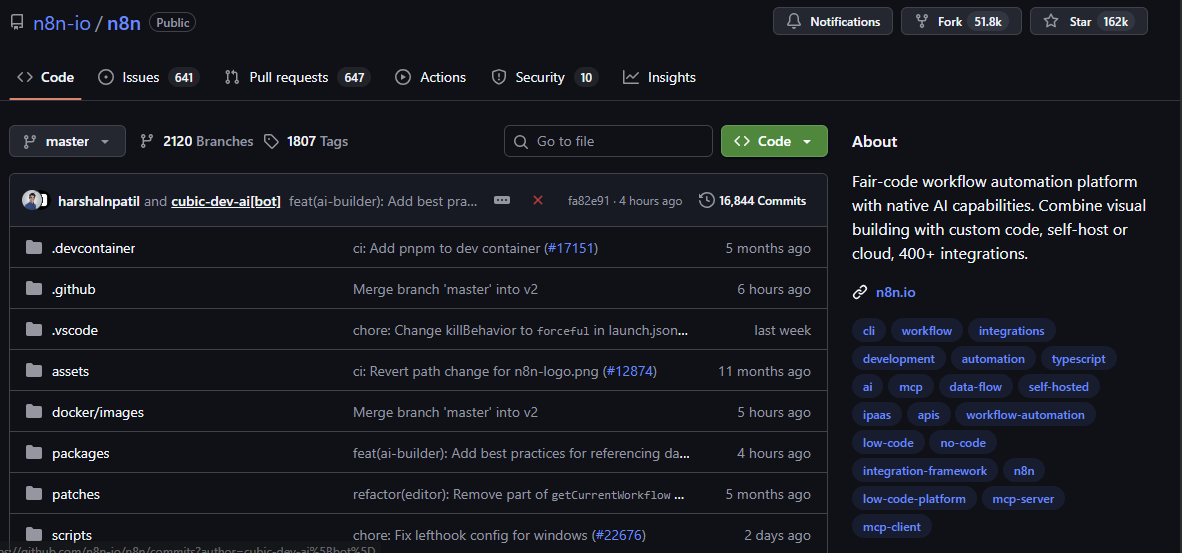

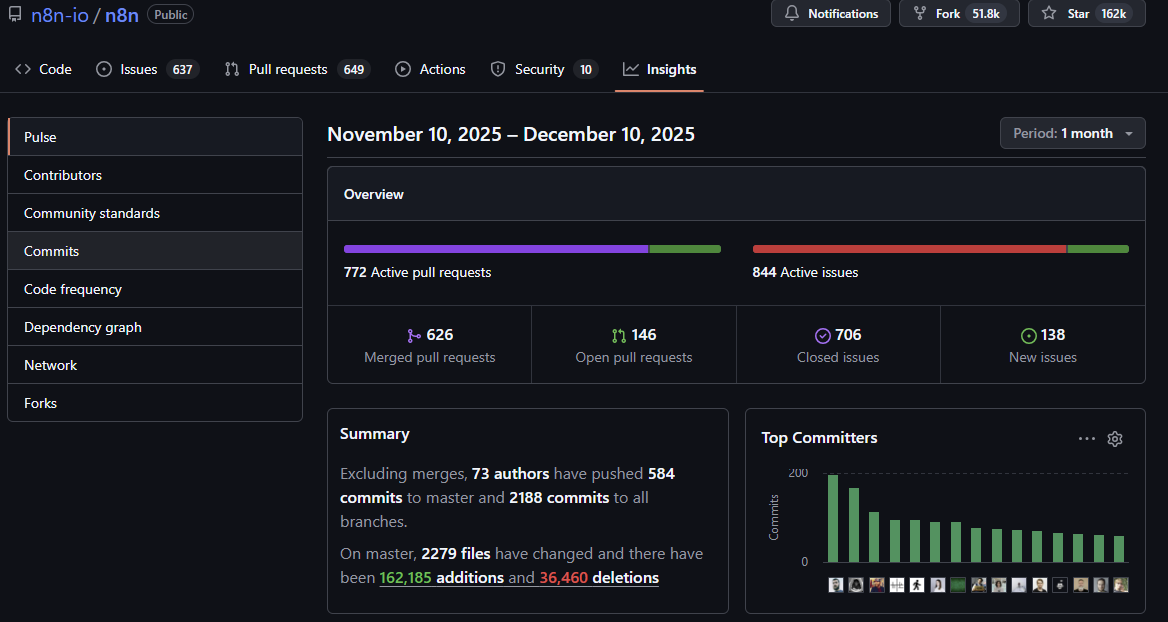

Continuing with the case of n8n, we can understand these metrics in the context of its GitHub repository.

GitHub repository of n8n with indicators of popularity

GitHub repository of n8n with insights around maintenance

5. Companies to watch

| Company | Description | Sector | Country |
|---|---|---|---|
| Cue Labs | Empower teams to manage configuration complexity directly and independently of code - ensuring systems run as intended. | Infra → Orchestration | Switzerland |
| Kestra | Declarative orchestration platform designed for data engineering and software development. | Infra → Orchestration | France |
| FalkorDB | Advanced graph database technology specializing in real-time data handling for AI and machine learning applications. | Infra → Data | Israel |
| Pydantic | Data validation and monitoring platform primarily focused on Python development. | Infra → Data | UK |
| Wundergraph | GraphQL federation platform providing comprehensive API management solutions. The platform offers full lifecycle management for GraphQL APIs, including schema registry, composition checks, analytics, metrics, tracing, and routing. | Infra → Data | Germany |
| Astral | Linter technology designed to make the Python ecosystem more productive by creating powerful development tools. | Infra → Dev | NY, US |
| Daytona | Enables safe execution of AI workflows with native Git and LSP support, allowing developers to run AI-generated code in isolated environments with full programmatic control. | LLMOps and AIOps | NY, US |
| Deepset | AI-powered platform specializing in enterprise natural language processing (NLP) solutions, centered around its open-source Haystack framework. | LLMOps and AIOps | Germany |
| Ultralytics | No-code platform that enables users to create, train, and deploy sophisticated AI models easily. | LLMOps and AIOps | NY, US |
| Netbird | Cybersecurity platform that provides zero-trust network security and simplified virtual private network (VPN) connectivity. | Cyber → Vulnerability | Germany |
| Formance | Programmable database and financial ledger to build enterprise applications. | Vertical SaaS → Financial Services | France |
| Seqera Labs | Bioinformatics company that provides an open-source workflow orchestration platform for researchers, specializing in simplifying complex data analysis pipelines in the cloud. | Vertical SaaS → Healthcare | Spain |

6. Conclusions

Open source is no longer a fringe model — it is core infrastructure across cloud, data, AI, cybersecurity and vertical software. For Forestay, this is squarely in scope with our thesis: mission-critical, deeply embedded enterprise platforms. OSS introduces a GTM motion we underwrite less frequently — community-led, bottom-up adoption — but when product–community fit is strong, it can create powerful internal champions and capital-efficient growth.

That said, OSS is not inherently defensible. Monetization must be explicit, the transition from community to enterprise takes time, and openness can compress moats if there is no proprietary layer. The most compelling models combine open distribution with differentiated enterprise capabilities (security, governance, scale, compliance), monetizing trust and reliability rather than code.

Forestay is actively exploring OSS-originated companies, particularly where community traction clearly converts into enterprise standardization and where a proprietary layer compounds defensibility. Discipline is critical, but when executed well, OSS can produce category-defining platforms fully aligned with our strategy.

References and Resources:

- The Cathedral and the Bazaar — Eric S. Raymond (1997)

- n8n — AI Workflow Automation Platform

- n8n GitHub Repository

- The State of Global Open Source 2025 — Linux Foundation

- Open Source: Inside 2025’s 4 Biggest Trends — The New Stack

- Open Source Business Models: Notes on Profiting from Free Software — Generative Value

- What’s in Store for Open Source in 2026? — Eclipse Foundation

- Open Source Technology in the Age of AI — McKinsey

- AI and Open Source Redefine Enterprise Data Platforms in 2025 — Forbes / Moor Insights

- How 100 Enterprise CIOs Are Building and Buying Gen AI in 2025 — a16z

- The Cathedral and the Bazaar — Wikipedia

- Linus’s Law — Wikipedia

- What is Open Source Software? — GitHub

- What Is Open Source Software? — IBM

- Open Source Software Life Cycle — Red Hat

- Awesome LLMOps — GitHub (tensorchord)